Google Bard vs. ChatGPT

Google has just announced its big arrival in the AI language model space with “Bard”, which is based on “LaMDA”, a model Google has been using for Google Assistant. Bard is set to rival OpenAI’s ChatGPT, which is backed by Microsoft (Google’s age-old rival), and it aims to improve customer interactions and support for businesses.

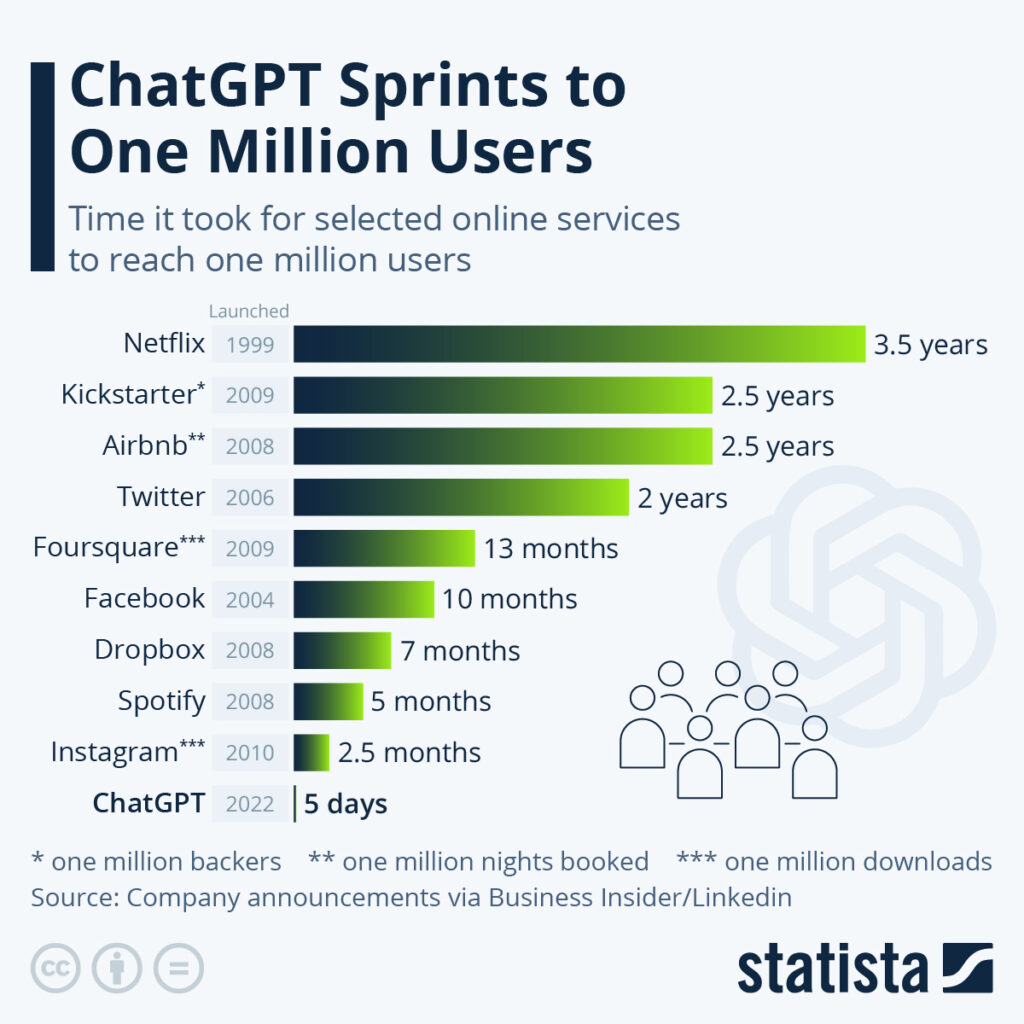

When ChatGPT launched and took the world by storm, reaching 1 million users in just 5 days, a red alert went off inside Google. The company considered ChatGPT a potential disruptor of search, which pushed it to work on Bard to compete with GPT-3.

Google Bard, with its advanced language capabilities, is meant to provide quick and accurate responses to customer queries, freeing up humans to focus on more complex tasks, though it could also cut millions of jobs in the process. As part of Google Cloud’s AI platform, Bard will have access to a range of tools and services that should make it more powerful and give it more data. It promises to bring new possibilities to the field of natural language processing, making it easier for people to interact with technology in a more intuitive and human-like way. Google’s expertise and resources should make it a strong competitor to OpenAI’s GPT-3 and could bring new breakthroughs and advancements in the field.

As the competition among language models heats up, Baidu, often referred to as the “Google of China”, is incorporating its “ERNIE bot” into its platform to rival OpenAI’s GPT-3. The chatbot is designed to improve the accuracy and speed of natural language processing, and Baidu’s shares skyrocketed following the announcement. With Baidu’s chatbot set to launch in March, we can expect to see even more seamless interactions between humans and machines.

Google has always been one of the most advanced AI companies in the world, but that doesn’t mean it can’t be beaten at its own game. Still, Google is the index of the Internet: it runs the most powerful crawler in the world, which indexes nearly every piece of content online. ChatGPT, by contrast, only has information up to 2021, so its answers can be out of date. Google has the whole Internet at its disposal and could keep its AI informed in near real time about everything that’s happening, making it more relevant and up to date.

AI models such as ChatGPT can be used to generate fake news and spread misinformation that is difficult to distinguish from the real thing, which can lead to serious consequences. As a result, Amazon has warned its employees to be cautious when using AI language models and to verify information before sharing it. Amazon has emphasized that employees should not use ChatGPT for tasks such as coding or customer service, as this could result in confidential or sensitive information being shared with the AI tool. Instead, Amazon has recommended that employees use other, more secure methods for these tasks.

AI models have been used in the past to create fake reviews and manipulate online platforms, which raises the need for caution and vigilance when using ChatGPT. It is important for individuals and organizations to understand the potential dangers of these models and to be aware of their limitations. While ChatGPT can be useful for generating information and improving productivity, it is crucial to use it responsibly and verify its output before sharing it. This helps ensure that the information being shared is accurate and trustworthy, while keeping the negative consequences of using ChatGPT to a minimum.

Universities have been stunned by ChatGPT’s writing skills and its ability to express complex ideas in a clear and concise manner. However, ChatGPT’s responses are only as good as the data it was trained on, and they may sometimes be biased or contain misinformation. But if the content is cross-checked and verified afterward, then what can be the issue, right?

A college student from Northern Michigan University might have been thinking the same thing, but was recently caught using ChatGPT to write an academic paper. Cases like this pose a threat to the principles of academic integrity and the value of higher education. In response to such concerns, RV University in Bengaluru has banned the use of ChatGPT on campus. The decision was made to uphold academic integrity during exams, lab tests, and assignments. The administration is taking measures to ensure that students do not plagiarize, including surprise checks and requiring students to resubmit assignments when plagiarism is suspected.

AI-generated content should not be used to misrepresent original work or to gain an unfair advantage. Universities should find ways to maintain the nature and quality of education and ensure that students are learning and delivering original work. Artificial intelligence should be used mindfully and with caution to avoid adverse consequences for teaching and academic integrity.